Lately, we have been experimenting with chatbot development. Every day brings progress on speed, ease of use, search quality, or something else. Here is a high-level summary so that a larger audience can benefit.

Build the Ask Zeneral chatbot first with sample data

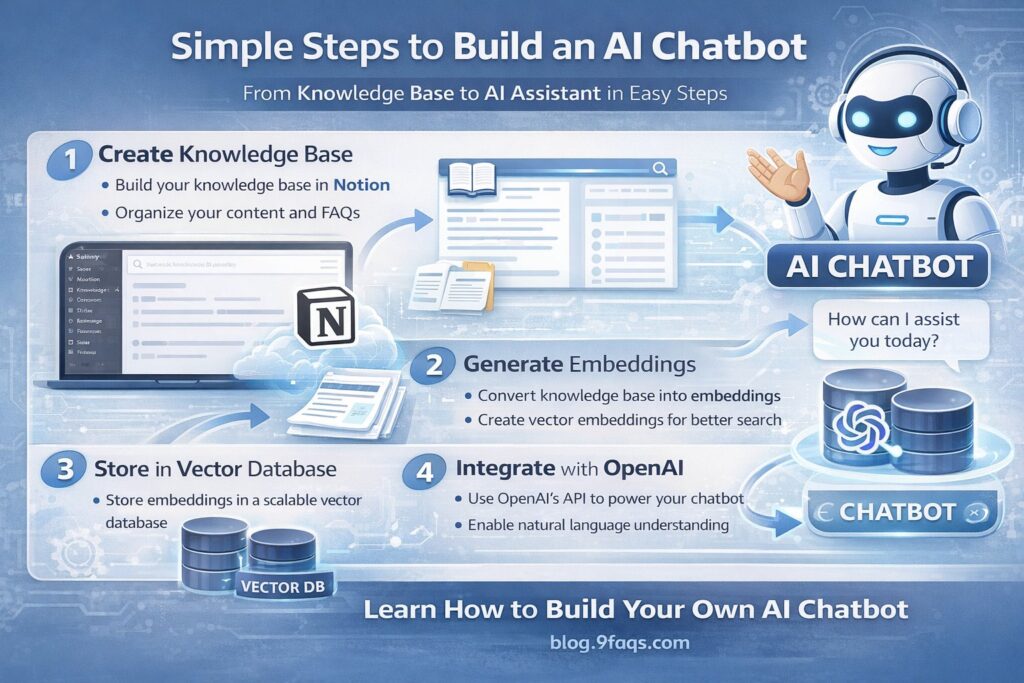

Create a small sample knowledge base, in Notion or even as simple text files initially.

Convert This Content Into Embeddings

Each document will be converted into vector embeddings.

Text document

↓

Embedding model

↓

Vector representation

↓

Stored in vector database

If the sample data is already in Notion, the next immediate step is to connect that data to the AI system so the chatbot can read, embed, and search it.

Turn your Notion content into searchable AI knowledge.

Get Notion API access

Give the integration access to your pages

Extract the Notion content

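```python
import os

from notion_client import Client

# Read the integration token from the environment instead of hard-coding it.
notion = Client(auth=os.environ["NOTION_API_KEY"])

# Query the database that holds the sample knowledge base.
response = notion.databases.query(database_id="YOUR_DATABASE_ID")

print(response)
```

Split Content Into Chunks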

AI works better with small pieces of text. AI models cannot efficiently search large documents directly.

If we store an entire page as one document, problems occur:

- retrieval becomes inaccurate

- AI gets too much context

- answers become vague

Instead we split it.

Suppose your Notion page looks like this:

AI Services

Zeneral provides several AI solutions including knowledge assistants, document intelligence, customer support agents, workflow automation, data insights systems and market intelligence platforms.

Knowledge Assistants

These systems allow employees to query company knowledge.

Document Intelligence

AI can extract structured data from documents such as invoices.

If this entire page is stored as one entry, then when someone asks:

“What is AI document processing?”

The vector search might return the whole page, which includes many unrelated topics.

AI then gets too much mixed information.

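Instead, we split the page into three chunks, one per section:

Chunk 1: AI Services

Chunk 2: Knowledge Assistants

Chunk 3: Document Intelligence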

Now if the user asks:

What is AI document processing?

Vector search retrieves Chunk 3 only.

The AI receives exactly the right context.

Typical chunk sizes:

| Size | Words | When to use |
|---|---|---|
| Small | 100–200 | FAQs |
| Medium | 200–400 | Best general-purpose size |
| Large | 500–700 | Long technical docs |

Chunks should slightly overlap so meaning is not lost.

Split content by idea, not randomly.

Good chunks represent:

• one concept

• one explanation

• one use case

✅ Good chunking = smart chatbot

Bad chunking = confused chatbot
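Splitting by idea usually follows the page structure, but a long entry may still need to be cut further. A minimal sketch of word-based chunking with overlap is shown here; the function name `chunk_words` and the default sizes are assumptions chosen to match the "Medium" row of the table, not fixed rules.

```python
def chunk_words(text: str, chunk_size: int = 300, overlap: int = 50) -> list[str]:
    """Split text into chunks of up to `chunk_size` words.

    Consecutive chunks share `overlap` words so that a sentence cut at a
    boundary still appears whole in one of the two chunks.
    """
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```

Calling `chunk_words(page_text)` returns a list of strings, each of which can be embedded and stored as its own entry.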

Designing the Notion structure correctly will make your chatbot much easier to build and maintain. If the structure is clean, chunking becomes almost automatic.

For a scalable chatbot knowledge base, a Notion database is a much better fit than free-form pages.

A Notion database is like a spreadsheet + pages combined.

Example database entry:

Title: AI Document Processing

Category: AI Service

Content: AI document processing systems automatically extract information from business documents such as invoices, contracts and reports. These systems reduce manual work and improve processing accuracy.

This becomes a perfect knowledge chunk.

✅ Python can read your Notion database

✅ Title, Category, and Content are extracted correctly

✅ Data is ready for the Zeneral knowledge base
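As a rough sketch of that extraction step, the helpers below flatten a Notion query response into plain dictionaries. They assume the database has a title property named `Title`, a select property named `Category`, and a rich-text property named `Content`; if your property names or types differ, the lookups need to change accordingly.

```python
def plain_text(rich_text_items: list[dict]) -> str:
    """Join the plain_text parts of a Notion rich-text array."""
    return "".join(item.get("plain_text", "") for item in rich_text_items)


def page_to_entry(page: dict) -> dict:
    """Turn one Notion page object into a flat knowledge entry."""
    props = page["properties"]
    # A select property is None when no option has been chosen.
    category = props["Category"].get("select") or {}
    return {
        "id": page["id"],
        "title": plain_text(props["Title"]["title"]),
        "category": category.get("name", ""),
        "content": plain_text(props["Content"]["rich_text"]),
    }


def response_to_entries(response: dict) -> list[dict]:
    """Convert a database query response into a list of knowledge entries."""
    return [page_to_entry(page) for page in response["results"]]
```

The resulting list of entries is the "Zeneral knowledge base" that the later steps read from.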

The next step is a complete, simple chatbot script that:

1️⃣ Reads your Notion database

2️⃣ Builds the Zeneral Knowledge Base

3️⃣ Sends it to the AI model

4️⃣ Lets you ask questions in the terminal

This gives you a working chatbot immediately (no vector DB yet).
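A minimal sketch of such a script might look like the following. It assumes the Notion rows have already been fetched into a list of dictionaries with `title`, `category`, and `content` keys; the model name `gpt-4o-mini`, the prompt wording, and all function names are illustrative assumptions. Because there is no vector search yet, the whole knowledge base is placed in the prompt, which only works while it stays small.

```python
SYSTEM_PROMPT = (
    "You are Ask Zeneral. Answer only from the knowledge base below. "
    "If the answer is not there, say you do not know."
)


def build_knowledge_base(entries: list[dict]) -> str:
    """Render every entry as a labelled text block."""
    blocks = [
        f"Title: {e['title']}\nCategory: {e['category']}\nContent: {e['content']}"
        for e in entries
    ]
    return "\n\n".join(blocks)


def build_messages(question: str, entries: list[dict]) -> list[dict]:
    """Put the whole knowledge base in the system message (no vector DB yet)."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT + "\n\n" + build_knowledge_base(entries)},
        {"role": "user", "content": question},
    ]


def ask(question: str, entries: list[dict], model: str = "gpt-4o-mini") -> str:
    """Send the question plus knowledge base to the model and return its answer."""
    from openai import OpenAI  # imported lazily so the helpers above work offline

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model=model,
        messages=build_messages(question, entries),
    )
    return response.choices[0].message.content


def chat_loop(entries: list[dict]) -> None:
    """Ask questions in the terminal until the user types 'exit' or 'quit'."""
    while True:
        question = input("Ask Zeneral> ").strip()
        if question.lower() in {"exit", "quit"}:
            break
        if question:
            print(ask(question, entries))
```

Running `chat_loop(entries)` starts the terminal session.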

pip install notion-client openai

OpenAI requires authentication. The credential is an API key.
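One common way to supply the key is through an environment variable, so that it never appears in the source code. The helper below is a small sketch of that idea; `require_env` is a hypothetical name, and `OPENAI_API_KEY` is the variable the official OpenAI Python client looks for by default.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable, or fail with a clear error."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value
```

For example, `require_env("OPENAI_API_KEY")` fails early with a readable message when the key is missing, instead of failing later inside an API call.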

OpenAI APIs require billing to be enabled. Set a usage limit under:

Billing → Usage Limits

Never commit your API key to GitHub. Keep it in one of:

• a .env file

• environment variables

Notion Database

↓

Python fetch

↓

Zeneral Knowledge Base

↓

AI Model

↓

Ask Zeneral Chatbot

Once this works, the next improvements are:

1️⃣ Add vector search

2️⃣ Add conversation memory

3️⃣ Deploy on zeneral.ai website

4️⃣ Add consultation lead capture

Notion database

↓

Python script

↓

Zeneral knowledge base

↓

OpenAI API

↓

AI-generated answer

Moving to a vector database is the right next step. It will make Ask Zeneral smarter and more scalable, especially as your knowledge base grows.

Notion database

↓

Create embeddings

↓

Store in vector database

↓

User question → embedding

↓

Vector search

↓

Retrieve relevant knowledge

↓

AI generates answer

This approach is called RAG (Retrieval Augmented Generation).

| Database | Difficulty | Notes |
|---|---|---|
| Pinecone | Easy | Most common for RAG |
| Supabase Vector | Medium | SQL + vectors |
| Weaviate | Advanced | Full vector platform |
| Chroma | Very easy | Local development |

pip install openai pinecone-client notion-client

Each knowledge entry must become a vector embedding.

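Vector databases are not built for reading text.

They index embeddings, which capture semantic meaning. The original text is usually stored alongside each vector as metadata so it can be returned at query time.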
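Turning each knowledge entry into a vector and storing it could look roughly like the sketch below. It assumes entries are dictionaries with `id`, `title`, `category`, and `content` keys, that a Pinecone index named `ask-zeneral` already exists, and that the key is in a `PINECONE_API_KEY` environment variable; those names are placeholders. The `text` metadata field is also an assumption, chosen so the original wording can be returned later.

```python
def entry_text(entry: dict) -> str:
    """Text that gets embedded: title and content together."""
    return f"{entry['title']}\n{entry['content']}"


def build_vectors(entries: list[dict], embed) -> list[dict]:
    """Pair each entry with its embedding in the shape Pinecone's upsert accepts.

    `embed` is any callable that maps a list of strings to a list of vectors.
    """
    texts = [entry_text(e) for e in entries]
    embeddings = embed(texts)
    return [
        {
            "id": e["id"],
            "values": values,
            # Keep the original text as metadata so it can be shown to the LLM later.
            "metadata": {"title": e["title"], "category": e["category"], "text": text},
        }
        for e, text, values in zip(entries, texts, embeddings)
    ]


def openai_embed(texts: list[str], model: str = "text-embedding-3-small") -> list[list[float]]:
    """Embed a batch of texts with the OpenAI embeddings API."""
    from openai import OpenAI  # lazy import: only needed for the real API call

    response = OpenAI().embeddings.create(model=model, input=texts)
    return [item.embedding for item in response.data]


def ingest(entries: list[dict], index_name: str = "ask-zeneral") -> None:
    """Embed all entries and upsert them into an existing Pinecone index."""
    import os
    from pinecone import Pinecone

    # The index dimension must match the embedding model
    # (1536 for text-embedding-3-small at its default size).
    index = Pinecone(api_key=os.environ["PINECONE_API_KEY"]).Index(index_name)
    index.upsert(vectors=build_vectors(entries, openai_embed))
```

Passing the embedding function in as an argument keeps `build_vectors` easy to try out with a stand-in before any API keys are set up.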

✅ Once vector search works, your chatbot becomes a real production-grade RAG system.

Understanding embeddings is crucial because they are the foundation of vector search and of RAG chatbots like Ask Zeneral.

An embedding converts text into a list of numbers that represent its semantic meaning.

Why Embeddings Are Powerful

Because similar meanings produce similar vectors. For example, "invoice processing" and "extracting data from bills" use different words, yet their embeddings are close in vector space.

Create Embeddings in Python

Using the OpenAI embeddings API.
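A minimal sketch of this step follows. The `embed` helper calls the OpenAI embeddings endpoint with `text-embedding-3-small`, and `cosine_similarity` is the usual way to measure how close two embeddings are. The function names are illustrative, and `embed` assumes the API key is available in the `OPENAI_API_KEY` environment variable.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def embed(text: str, model: str = "text-embedding-3-small") -> list[float]:
    """Convert one piece of text into its embedding vector."""
    from openai import OpenAI  # lazy import: only needed for the real API call

    response = OpenAI().embeddings.create(model=model, input=text)
    return response.data[0].embedding
```

Comparing `cosine_similarity(embed(a), embed(b))` for two phrases gives a score that is higher when their meanings are closer.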

LangChain came up earlier in the discussion, so it is important to understand the difference:

Embeddings are created by models (like OpenAI), not by LangChain.

LangChain is just a framework that helps orchestrate the steps.

| Provider | Embedding Model |
|---|---|
| OpenAI | text-embedding-3-small |
| Cohere | embed-english-v3 |
| Google | textembedding-gecko |
| HuggingFace | sentence-transformers |

What LangChain Actually Is

LangChain is a developer framework for building AI pipelines.

It helps manage things like:

documents

↓

chunking

↓

embeddings

↓

vector database

↓

retrieval

↓

LLM response

It does not create embeddings itself.

Instead it calls embedding providers for you.

LangChain becomes useful when your system grows and you need:

• document loaders

• automatic chunking

• retrieval pipelines

• agent workflows

• multi-step reasoning

LangChain is optional.

Many large production systems use plain Python + APIs.

Summary:

| Component | Role |
|---|---|
| OpenAI | Creates embeddings |
| LangChain | Manages AI pipeline |
| Pinecone | Stores vectors |
| Your Python code | Orchestrates everything |

LLMs are language models, not databases.

They do not automatically apply exact filtering logic unless prompted clearly.
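One way to get exact filtering is to apply it in code, before the model sees any data, rather than relying on the prompt alone. The sketch below filters knowledge entries by exact field values; `filter_entries` and the field names are hypothetical. Pinecone queries also accept a metadata `filter` argument that serves the same purpose on the database side.

```python
def filter_entries(entries: list[dict], **required) -> list[dict]:
    """Keep only entries whose fields match every required value exactly.

    Matching ignores case and surrounding whitespace, but is otherwise strict:
    no fuzzy or semantic matching happens here.
    """
    def normalise(value) -> str:
        return str(value).strip().lower()

    def matches(entry: dict) -> bool:
        return all(
            normalise(entry.get(field, "")) == normalise(value)
            for field, value in required.items()
        )

    return [entry for entry in entries if matches(entry)]
```

For instance, `filter_entries(entries, category="AI Service")` returns only the entries in that category, and the LLM can then be asked to answer from that subset.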

Once the Pinecone ingestion script is done, the next step is the retrieval and answer generation script. This script will:

1️⃣ Convert the user question → embedding

2️⃣ Search Pinecone vector database

3️⃣ Retrieve the most relevant knowledge entries

4️⃣ Send them to OpenAI

5️⃣ Generate the final answer

This completes the RAG pipeline for Ask Zeneral.
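The five steps can be sketched as one small function that takes the embedding, search, and generation steps as arguments. This is an outline under assumptions rather than a finished script: the index name `ask-zeneral`, the `text` metadata field, the `PINECONE_API_KEY` variable, and the prompt wording are all placeholders that depend on how ingestion was done.

```python
def build_context(matches: list[dict], min_score: float = 0.0) -> str:
    """Join the stored text of every match that scores at least `min_score`."""
    texts = [m["metadata"]["text"] for m in matches if m["score"] >= min_score]
    return "\n\n".join(texts)


def answer_question(question: str, embed, search, generate, top_k: int = 3) -> str:
    """Run the retrieval and answer generation steps in order."""
    query_vector = embed(question)          # 1. question -> embedding
    matches = search(query_vector, top_k)   # 2-3. vector search, most relevant entries
    context = build_context(matches)
    if not context:
        return "I could not find that in the knowledge base."
    prompt = (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
    return generate(prompt)                 # 4-5. send to the LLM, return its answer


def pinecone_search(query_vector: list[float], top_k: int, index_name: str = "ask-zeneral") -> list[dict]:
    """Query an existing Pinecone index and return plain dictionaries."""
    import os
    from pinecone import Pinecone  # lazy import: only needed for the real query

    index = Pinecone(api_key=os.environ["PINECONE_API_KEY"]).Index(index_name)
    response = index.query(vector=query_vector, top_k=top_k, include_metadata=True)
    return [{"score": m.score, "metadata": dict(m.metadata or {})} for m in response.matches]
```

With real `embed` and `generate` functions that wrap the OpenAI API, the call becomes `answer_question(question, embed, pinecone_search, generate)`.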

Notion Database

↓

Ingestion Script

↓

Embeddings

↓

Pinecone Vector DB

↓

Retrieval Script

↓

OpenAI Model

↓

Ask Zeneral Chatbot

If an image is uploaded to the Notion database, can the chatbot also look for answers in the image?

Short answer: Yes, but not automatically with the current setup.

Your current pipeline (Notion → embeddings → Pinecone → RAG chatbot) only processes text. Images require an extra step to convert them into text or embeddings.

Notion database

↓

Fetch rows

↓

Check if image exists

↓

Extract image URL

↓

OCR / Vision model

↓

Convert image → text

↓

Create embedding

↓

Store in Pinecone

Enterprise RAG systems often support:

• PDF

• Images

• PowerPoint

• Web pages

• Notion

• Google Docs

All are converted to text chunks before embedding.
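For the image case, the "check if image exists" and "extract image URL" steps could be sketched as below. The property name `Image` is an assumption about the database schema, and `image_to_text` stands in for whichever OCR or vision model is used; it is passed in as a plain function so the sketch does not commit to a specific tool.

```python
IMAGE_EXTENSIONS = (".png", ".jpg", ".jpeg", ".gif", ".webp")


def image_urls(page: dict, property_name: str = "Image") -> list[str]:
    """Return the URLs of image files attached to a Notion files property."""
    prop = page["properties"].get(property_name, {})
    urls = []
    for item in prop.get("files", []):
        # Uploaded files keep their URL under "file", linked ones under "external".
        url = item.get(item.get("type", ""), {}).get("url", "")
        path = url.split("?", 1)[0].lower()  # ignore any signed query string
        if path.endswith(IMAGE_EXTENSIONS):
            urls.append(url)
    return urls


def image_entries(page: dict, image_to_text, property_name: str = "Image") -> list[dict]:
    """Convert each image on a page into a text entry ready for embedding."""
    entries = []
    for number, url in enumerate(image_urls(page, property_name), start=1):
        # URLs of files uploaded to Notion expire, so convert them soon after fetching.
        text = image_to_text(url).strip()
        if text:
            entries.append({"id": f"{page['id']}-image-{number}", "content": text})
    return entries
```

The entries returned by `image_entries` can then go through the same embedding and storage steps as ordinary text rows.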

Let us say I have data in Excel sheets and want to add it to the knowledge base so the chatbot can process the data and answer questions about it.

Yes, Excel data can be added to your knowledge base, and this is a very common requirement for AI assistants. The key point is:

The chatbot does not read Excel directly.

We convert Excel rows into text knowledge entries or structured metadata before creating embeddings.

Since you already have the pipeline (Notion → embeddings → Pinecone → retrieval), Excel can be integrated into the same ingestion pipeline.
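A minimal sketch of the row-to-text conversion is shown below. It assumes each spreadsheet row should become one knowledge entry and that the column headers are meaningful labels; `row_to_text` and `load_rows` are hypothetical helper names. Reading `.xlsx` files with pandas also requires the `openpyxl` package.

```python
import math


def row_to_text(row: dict, sheet_name: str = "") -> str:
    """Render one spreadsheet row as a 'column: value' sentence, skipping empty cells."""
    parts = []
    for column, value in row.items():
        is_nan = isinstance(value, float) and math.isnan(value)
        if value is None or is_nan or str(value).strip() == "":
            continue
        parts.append(f"{column}: {value}")
    prefix = f"[{sheet_name}] " if sheet_name else ""
    return prefix + "; ".join(parts)


def load_rows(path: str, sheet_name=0) -> list[dict]:
    """Read one sheet of an Excel file into a list of row dictionaries."""
    import pandas as pd  # lazy import: only needed when a real file is read

    return pd.read_excel(path, sheet_name=sheet_name).to_dict(orient="records")
```

Each string produced by `row_to_text` can be embedded and stored in the same way as a Notion entry.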

Excel file

↓

Python reads rows

↓

Convert rows → text

↓

Create embeddings

↓

Store in Pinecone

In Notion I added the founder of Zeneral. In the chatbot I asked for the "fonder". Unfortunately, it was unable to give an answer. Can the chatbot understand that "fonder" is misspelled and search for "founder" instead?

Yes — a well-designed RAG chatbot can usually handle misspellings like “fonder” vs “founder.” But whether it works depends on where the correction happens in the pipeline. In your case, the failure likely happened before the LLM step — during vector retrieval.

User question

↓

Create embedding

↓

Vector search in Pinecone

↓

Retrieve context

↓

Send context to LLM

↓

Generate answer

Solution: Use a Query Rewrite Step

Before doing vector search, send the user question to the LLM to rewrite it clearly.

User question

↓

LLM query rewrite

↓

Create embedding

↓

Vector search

↓

Retrieve context

↓

Generate answer
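The rewrite step could be sketched as follows. The instruction wording, the function names, and the model `gpt-4o-mini` are assumptions for illustration; `complete` is any function that takes a list of chat messages and returns the model's reply as a string.

```python
REWRITE_INSTRUCTIONS = (
    "Rewrite the user's question as one clear search query. "
    "Fix spelling mistakes and keep the original meaning. "
    "Return only the rewritten query."
)


def rewrite_query(question: str, complete) -> str:
    """Ask the LLM to clean up the question before it is embedded."""
    messages = [
        {"role": "system", "content": REWRITE_INSTRUCTIONS},
        {"role": "user", "content": question},
    ]
    rewritten = complete(messages).strip().strip('"')
    # Fall back to the original question if the model returns nothing usable.
    return rewritten or question


def openai_complete(messages: list[dict], model: str = "gpt-4o-mini") -> str:
    """Send chat messages to OpenAI and return the reply text."""
    from openai import OpenAI  # lazy import: only needed for the real API call

    response = OpenAI().chat.completions.create(
        model=model,
        messages=messages,
        temperature=0,  # keep rewrites as repeatable as possible
    )
    return response.choices[0].message.content or ""
```

The rewritten query from `rewrite_query(question, openai_complete)` is what gets embedded and searched, while the final answer can still be generated against the user's original wording.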